Roblox is giving parents more oversight of their young players, adding new blocking and monitoring tools to its recently revamped parental control settings.

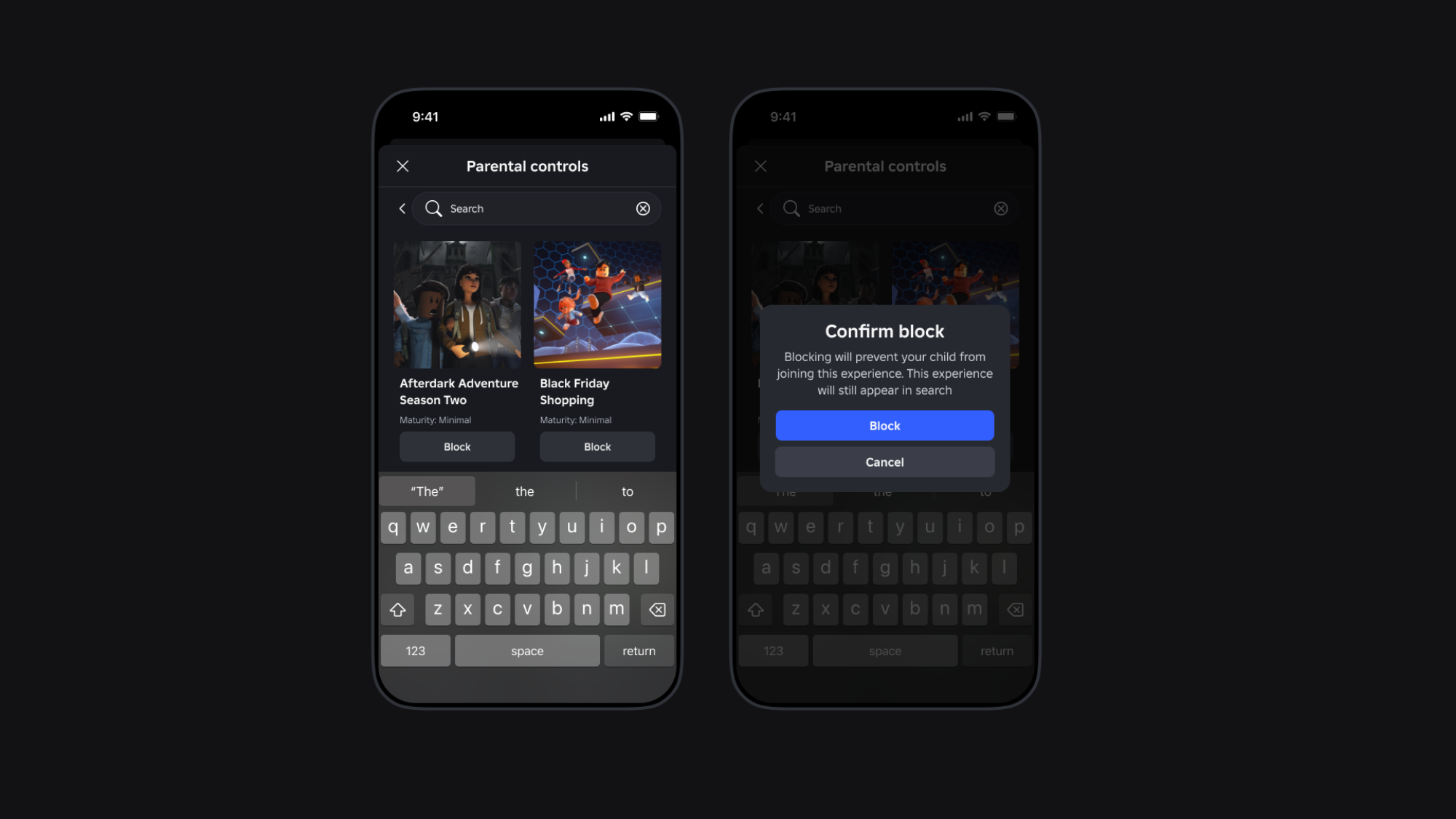

The update lets parents block and report specific friends on their child’s friends list, as well as block individual experiences. Until this update, parents could only prevent children from accessing experiences by blocking an entire maturity level. Children under the age of 13 can request to unblock a friend or experience at any time, but a parent must approve the request.

Credit: Roblox

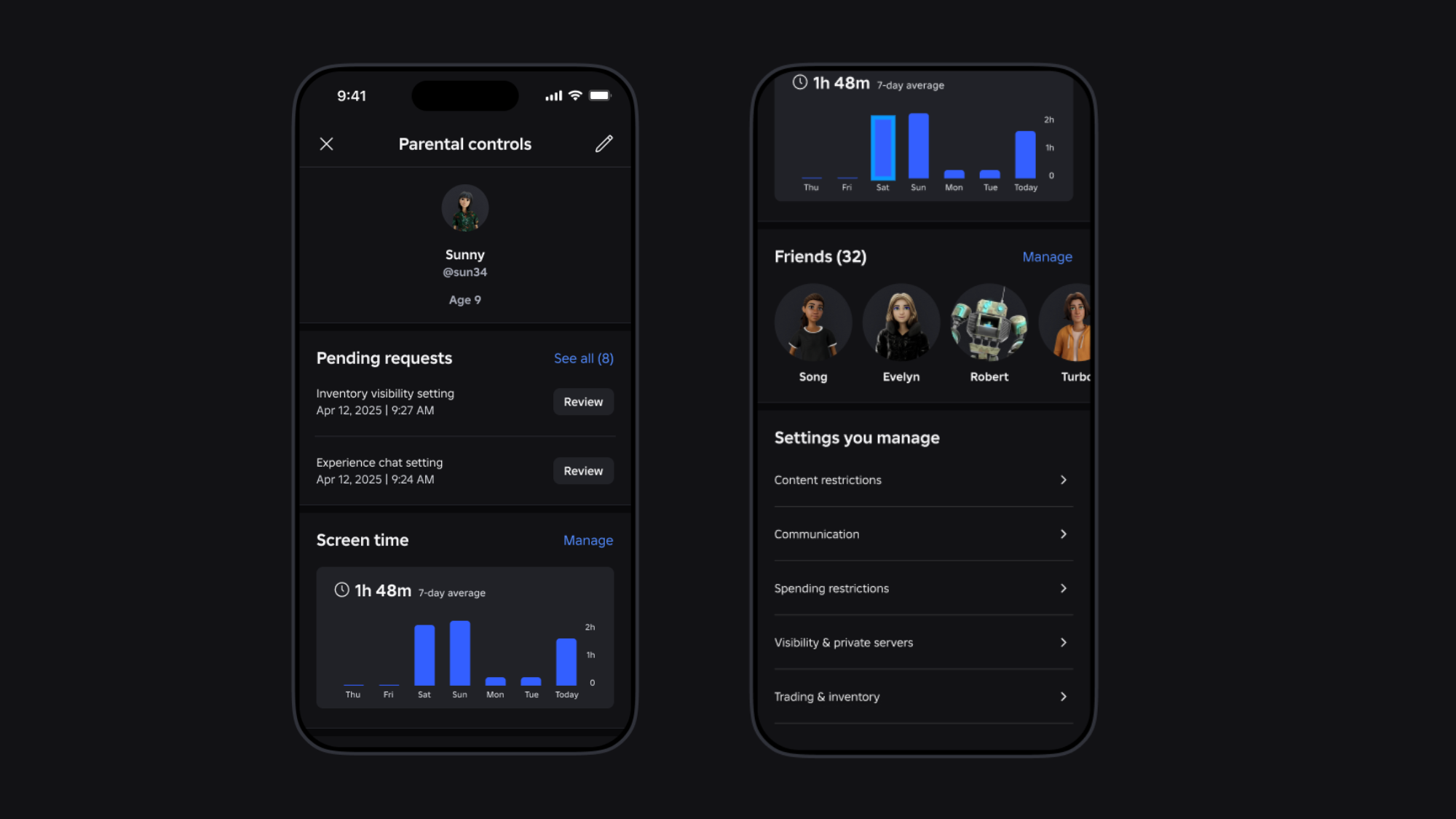

Parents can also find more detailed weekly insights into their children’s activity, including their child’s top 20 most-visited experiences over the last week. They can also access additional safety resources on Roblox’s updated Safety Center.

In addition, the platform announced the launch of its updated open-source voice safety classifier, a large classification model designed to help detect and classify toxic voice chats. The updated version, which the company says is used to moderate “millions of minutes of voice chat per day,” joins the open-source child safety effort known as ROOST, founded by Roblox and other tech companies like Google, OpenAI and Discord.

Credit: Roblox

“Providing tools [like] blocking specific friends and Roblox experiences, along with visibility into how long their child is playing Roblox games, enables parents to better support their children’s safe use of the platform,” said Larry Magid, CEO of ConnectSafely, a tech safety nonprofit that has partnered with Roblox. “Safety, fun, and adventure are not mutually exclusive.”

In November, Roblox announced a suite of new safety features, including ID-verified parent accounts and remote monitoring tools like screen time limits, restrictions on games based on maturity level, and access to friend lists. The platform also barred children under the age of 13 from directly messaging other users, as well as from messaging fellow players while in a Roblox experience.

These changes followed an outpouring of concern from parents and child safety advocates over Roblox’s alleged hosting of inappropriate content, as well as predation and grooming of child users, despite the company advertising itself as a platform for children. In 2023, a group of parents sued the gaming site for negligent misrepresentation and false advertising.

The company later added new safety rules and features, including expanded parental controls. According to Roblox chief safety officer Matt Kaufman, the platform introduced more than 40 safety updates in 2024.

If you are a child being sexually exploited online, or you know a child who is being sexually exploited online, or you witnessed the exploitation of a child online, you can report it to the CyberTipline, which is operated by the National Center for Missing & Exploited Children.